How to Build a Gigascale AI Network with NVIDIA Spectrum-X and MRC

Introduction

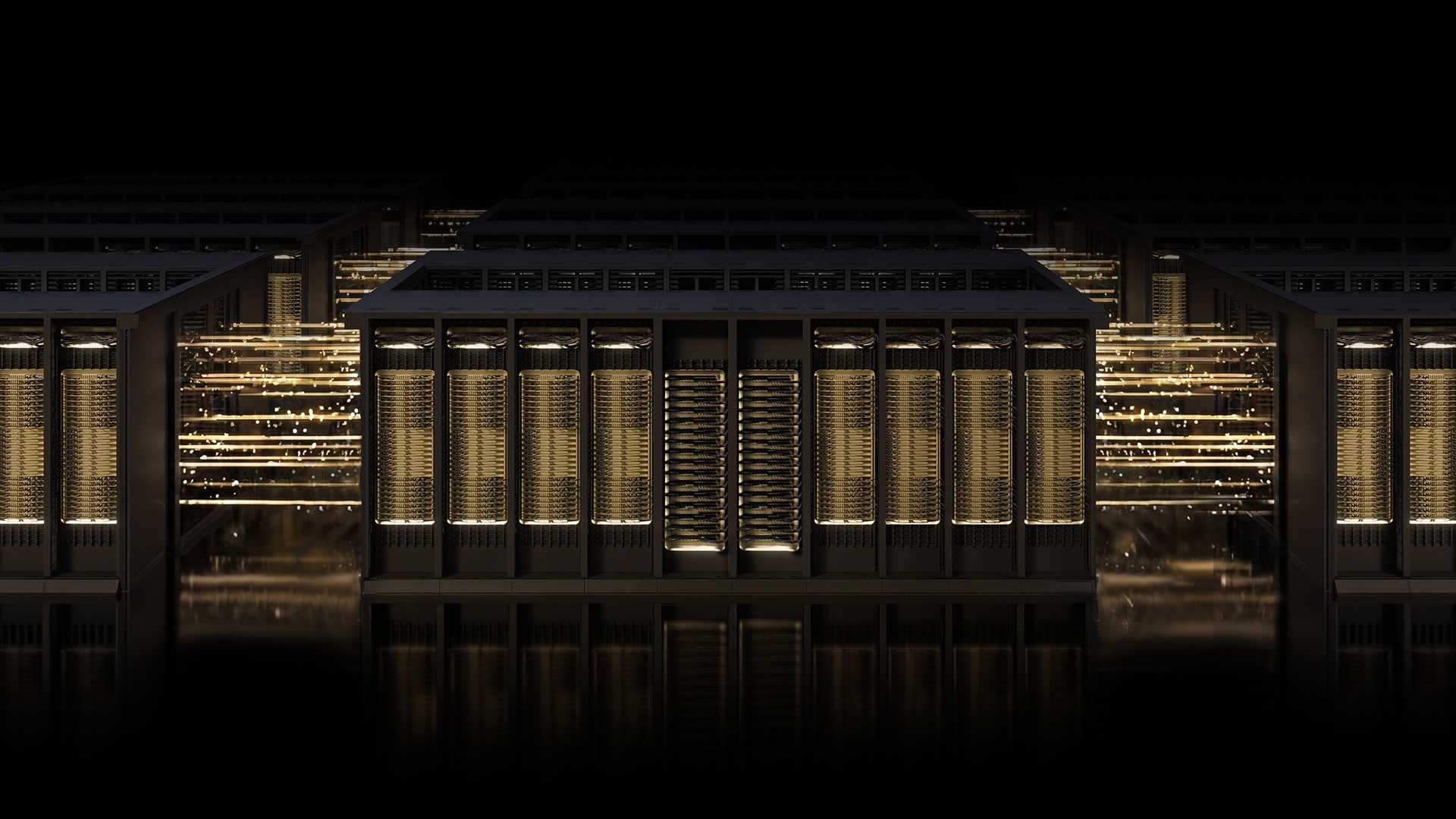

The race to build the world’s most powerful AI factories demands networking that keeps pace with the ambitions of AI itself. NVIDIA Spectrum-X Ethernet is a scale-out infrastructure built for that job. With the introduction of Multipath Reliable Connection (MRC), an RDMA transport protocol, you can pursue the throughput, load balancing, and availability that large-scale AI training requires. This guide walks you through the key steps to implement MRC on a Spectrum-X fabric so that the network is far less likely to bottleneck your AI workloads.

What You Need

- NVIDIA Spectrum-X Ethernet hardware (e.g., Spectrum-4 switches)

- NVIDIA ConnectX-7 or later SmartNICs

- RDMA-capable host systems (GPU servers)

- The MRC specification, published through the Open Compute Project (OCP) as an open standard

- Network configuration tools (e.g., NVIDIA DOCA software)

- Access to a large-scale AI training cluster (at least 16 GPUs recommended)

- Deep telemetry and fabric control software (NVIDIA Fabric Manager)

Step-by-Step Guide

Step 1: Understand the Role of MRC in AI Networking

Before deploying, understand how MRC changes your network. Traditional RDMA uses a single path between endpoints, like a single-lane road. MRC creates a multipath grid: traffic from one RDMA connection is split across multiple physical paths and rerouted in real time around congestion or failures. Because a slow or failed link no longer stalls the whole connection, GPU utilization rises and training interruptions drop. Deployments by OpenAI, Microsoft, and Oracle are cited as evidence that MRC reduces the network slowdowns common in frontier training runs.
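To make the single-lane versus multipath contrast concrete, here is a minimal sketch in plain Python. It is a toy model, not the MRC protocol: the per-path rates, the packet count, and both function names are assumptions chosen for illustration, and it assumes a sender that can use the combined rate of every healthy path.

```python
from math import ceil

def single_path_time(num_packets, path_rates, path_index):
    """Ticks needed to send every packet over one fixed path."""
    rate = path_rates[path_index]
    if rate == 0:
        return float("inf")  # the path is down, so the connection stalls
    return ceil(num_packets / rate)

def multipath_time(num_packets, path_rates):
    """Ticks needed when packets are sprayed across all paths at their combined rate."""
    total_rate = sum(path_rates)
    if total_rate == 0:
        return float("inf")
    return ceil(num_packets / total_rate)

# Hypothetical rates in packets per tick: path 1 is congested, path 3 has failed.
rates = [10, 2, 10, 0]

single_congested = single_path_time(1000, rates, path_index=1)
single_failed = single_path_time(1000, rates, path_index=3)
multi = multipath_time(1000, rates)

print(single_congested, single_failed, multi)
```

In this toy model the connection pinned to the congested path needs 500 ticks, the one pinned to the failed path never finishes, and the multipath connection finishes in 46 ticks because it draws on every working path.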

Step 2: Prepare Your Spectrum-X Hardware and Firmware

Ensure your switches and NICs are MRC-ready. Update both to the latest firmware that supports the MRC transport protocol. NVIDIA Spectrum-X Ethernet hardware is purpose-built for this: enable the P4-programmable data plane and the advanced RoCE (RDMA over Converged Ethernet) features. Then verify that your ConnectX NICs report the required RDMA capabilities and are configured for multipath operation.

Step 3: Configure the Fabric for Multipath Routing

With MRC, the network fabric must support equal-cost multipath (ECMP) or weighted-cost multipath (WCMP) routing at the switch level. Use NVIDIA Fabric Manager to set up a non-blocking topology with full bisection bandwidth. Define multiple disjoint paths between each GPU pair. The Spectrum-X switches allow you to program custom flow tables, so use OCP’s MRC specification to define path groups and load-balancing weights.
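The idea of a path group with load-balancing weights can be sketched as follows. This is an illustration only: the spine names and weights are hypothetical, the dictionary is not the format used by the MRC specification or by any switch configuration tool, and the scheduler shown is a generic smooth weighted round-robin rather than the algorithm a Spectrum-X switch runs.

```python
from collections import Counter

def weighted_schedule(weights, count):
    """Pick `count` paths so that each path is chosen in proportion to its weight.

    Uses smooth weighted round-robin: every round each path gains its weight
    in credit, the path with the most credit is picked, and the picked path
    pays back the total weight.
    """
    credit = {path: 0 for path in weights}
    total = sum(weights.values())
    picks = []
    for _ in range(count):
        for path, weight in weights.items():
            credit[path] += weight
        best = max(credit, key=credit.get)
        credit[best] -= total
        picks.append(best)
    return picks

# Hypothetical path group: spine1 has twice the capacity of the other two.
path_group = {"spine1": 2, "spine2": 1, "spine3": 1}

picks = weighted_schedule(path_group, count=8)
usage = Counter(picks)
print(picks)
print(usage)
```

Over eight packets the heavier path carries four and the lighter paths carry two each, and the picks are interleaved rather than sent in bursts, which is the behavior you want from weighted multipath forwarding.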

Step 4: Implement the MRC RDMA Transport on Hosts

Install DOCA drivers and library support for MRC on each host. Modify your RDMA application (or use NCCL with MRC backend) to create multipath connections. The protocol handles per-packet load balancing: enable adaptive routing so that traffic dynamically avoids overloaded links. Test with a simple all-reduce benchmark to verify that throughput scales with the number of paths.
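When you run the all-reduce benchmark, it helps to turn raw timings into comparable numbers. The sketch below uses the bus-bandwidth convention from the nccl-tests documentation, where bus bandwidth equals algorithm bandwidth multiplied by 2(n-1)/n for n ranks. The helper names and every sample measurement are placeholders for illustration, not results from a real cluster.

```python
def allreduce_bus_bandwidth(bytes_per_rank, seconds, num_ranks):
    """Bus bandwidth in bytes/s for an all-reduce, per the nccl-tests convention."""
    algorithm_bw = bytes_per_rank / seconds
    return algorithm_bw * 2 * (num_ranks - 1) / num_ranks

def scaling_efficiency(bandwidth_by_paths):
    """Measured bandwidth relative to ideal linear scaling from the smallest path count."""
    base_paths = min(bandwidth_by_paths)
    base_bw = bandwidth_by_paths[base_paths]
    return {
        paths: bw / (base_bw * paths / base_paths)
        for paths, bw in bandwidth_by_paths.items()
    }

# Placeholder timing: 4e9 bytes per rank reduced in 0.25 s across 16 ranks.
bus_bw = allreduce_bus_bandwidth(4e9, 0.25, 16)

# Placeholder bandwidths (GB/s) measured with 1, 2, and 4 active paths.
efficiency = scaling_efficiency({1: 10.0, 2: 19.0, 4: 36.0})

print(f"bus bandwidth: {bus_bw / 1e9:.1f} GB/s")
print(efficiency)
```

An efficiency close to 1.0 at each path count suggests throughput is scaling with the number of paths. A sharp drop points to a path that is not carrying its share.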

Step 5: Enable Intelligent Retransmission and Congestion Control

MRC’s retransmission logic recovers lost packets without stalling the entire connection. Configure the NIC’s selective retransmission timer and set thresholds for congestion detection. Feed Spectrum-X congestion signals (e.g., ECN marks and PFC pause events) back into the MRC congestion control algorithm. This keeps GPU idle time low even when individual links drop packets.
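The benefit of selective retransmission is easiest to see next to a go-back-N scheme, which resends everything from the first gap onward. The sketch below is a generic illustration of the two strategies, not the MRC wire protocol. The function names and the arrival sequence are assumptions, and out-of-order arrival is assumed because packets travel over different paths.

```python
def selective_resend(received, highest_sent):
    """Sequence numbers to resend when only the missing packets are retransmitted."""
    got = set(received)
    return [seq for seq in range(highest_sent + 1) if seq not in got]

def go_back_n_resend(received, highest_sent):
    """Sequence numbers to resend when everything after the first gap is retransmitted."""
    got = set(received)
    for seq in range(highest_sent + 1):
        if seq not in got:
            return list(range(seq, highest_sent + 1))
    return []

# Packets 0..7 were sent over several paths; they arrive out of order and 3 is lost.
arrivals = [0, 2, 1, 4, 5, 7, 6]

selective = selective_resend(arrivals, highest_sent=7)
go_back_n = go_back_n_resend(arrivals, highest_sent=7)

print("selective:", selective)
print("go-back-N:", go_back_n)
```

Here selective retransmission resends one packet while go-back-N resends five, which is why a single drop does not have to stall the whole connection.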

Step 6: Monitor and Optimize with Telemetry

Pre-built dashboards in NVIDIA Fabric Manager provide fine-grained visibility: per-flow path usage, congestion hot spots, and retransmission rates. Set up alerts for any imbalance. Use these insights to adjust multipath weights or add redundant links. Administrators can quickly troubleshoot issues by identifying exactly which path caused a delay.
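If you export per-flow counters from your telemetry system, an imbalance alert can be as simple as the check sketched below. The data layout, function names, and both thresholds are hypothetical. They do not reflect the schema or defaults of any NVIDIA tool, so adapt them to whatever your telemetry actually exposes.

```python
def path_imbalance(bytes_by_path):
    """Ratio of the busiest path's load to the mean load (1.0 means perfectly even)."""
    loads = list(bytes_by_path.values())
    if not loads:
        return 0.0
    mean = sum(loads) / len(loads)
    return max(loads) / mean if mean > 0 else 0.0

def retransmission_rate(sent, retransmitted):
    """Fraction of sent packets that had to be retransmitted."""
    return retransmitted / sent if sent else 0.0

def check_flow(bytes_by_path, sent, retransmitted,
               imbalance_limit=1.25, retx_limit=0.01):
    """Return a list of alert strings for one flow; limits are illustrative."""
    alerts = []
    if path_imbalance(bytes_by_path) > imbalance_limit:
        busiest = max(bytes_by_path, key=bytes_by_path.get)
        alerts.append(f"imbalance: {busiest} carries a disproportionate share")
    if retransmission_rate(sent, retransmitted) > retx_limit:
        alerts.append("retransmission rate above limit")
    return alerts

# Hypothetical counters for one flow spread over three paths.
sample = {"path0": 100, "path1": 100, "path2": 200}
alerts = check_flow(sample, sent=10_000, retransmitted=50)
print(alerts)
```

In this sample the busiest path carries 1.5 times the mean load, so the imbalance alert fires, while the 0.5% retransmission rate stays under the limit.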

Step 7: Scale to Gigascale AI Production

Once validated on a small cluster, expand to hundreds or thousands of GPUs. MRC is designed to scale with the fabric: the more disjoint paths a connection can use, the more bandwidth and resilience it gains. Apply the same configuration across all racks. For large deployments like Microsoft Fairwater or Oracle Abilene, rely on Spectrum-X’s automated fabric orchestration to maintain MRC path definitions as the cluster grows.

Tips for Success

- Start with a validation cluster: Before deploying at scale, test MRC with at least 16 GPUs in a non-blocking leaf-spine topology. Measure GPU utilization without MRC as a baseline, then measure again with MRC enabled.

- Check compatibility with your RDMA stack: MRC works best with NCCL 2.18+ or custom transports. Verify that your training framework (PyTorch, JAX) can use multipath RDMA.

- Monitor path diversity: If your physical topology doesn’t provide enough disjoint paths, consider adding redundant links or moving to a higher-radix switch like Spectrum-4.

- Watch for tail latency: MRC reduces average latency but can introduce tail outliers if paths differ too much in length or load. Use telemetry to find uneven routes, then remove them or lower their weights.

- Stay updated with OCP: The MRC specification is open—check for updates that add new features like in-network computing or better fairness.
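For the tail-latency tip above, comparing a median with a high percentile is a quick way to spot asymmetrical paths. The sketch below uses a nearest-rank percentile. The latency samples are invented for illustration, and the helper name is an assumption.

```python
from math import ceil

def percentile(values, pct):
    """Nearest-rank percentile: the smallest value with at least pct% of samples at or below it."""
    ordered = sorted(values)
    rank = max(1, ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]

# Invented latencies in microseconds: most packets take 10, two took a slow path.
latencies_us = [10] * 98 + [80] * 2

p50 = percentile(latencies_us, 50)
p99 = percentile(latencies_us, 99)
tail_ratio = p99 / p50

print(f"p50={p50} us, p99={p99} us, tail ratio={tail_ratio:.1f}")
```

A median of 10 µs next to a p99 of 80 µs means the average looks healthy while a few packets take a much slower route, which is the pattern to look for before adjusting path weights.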
