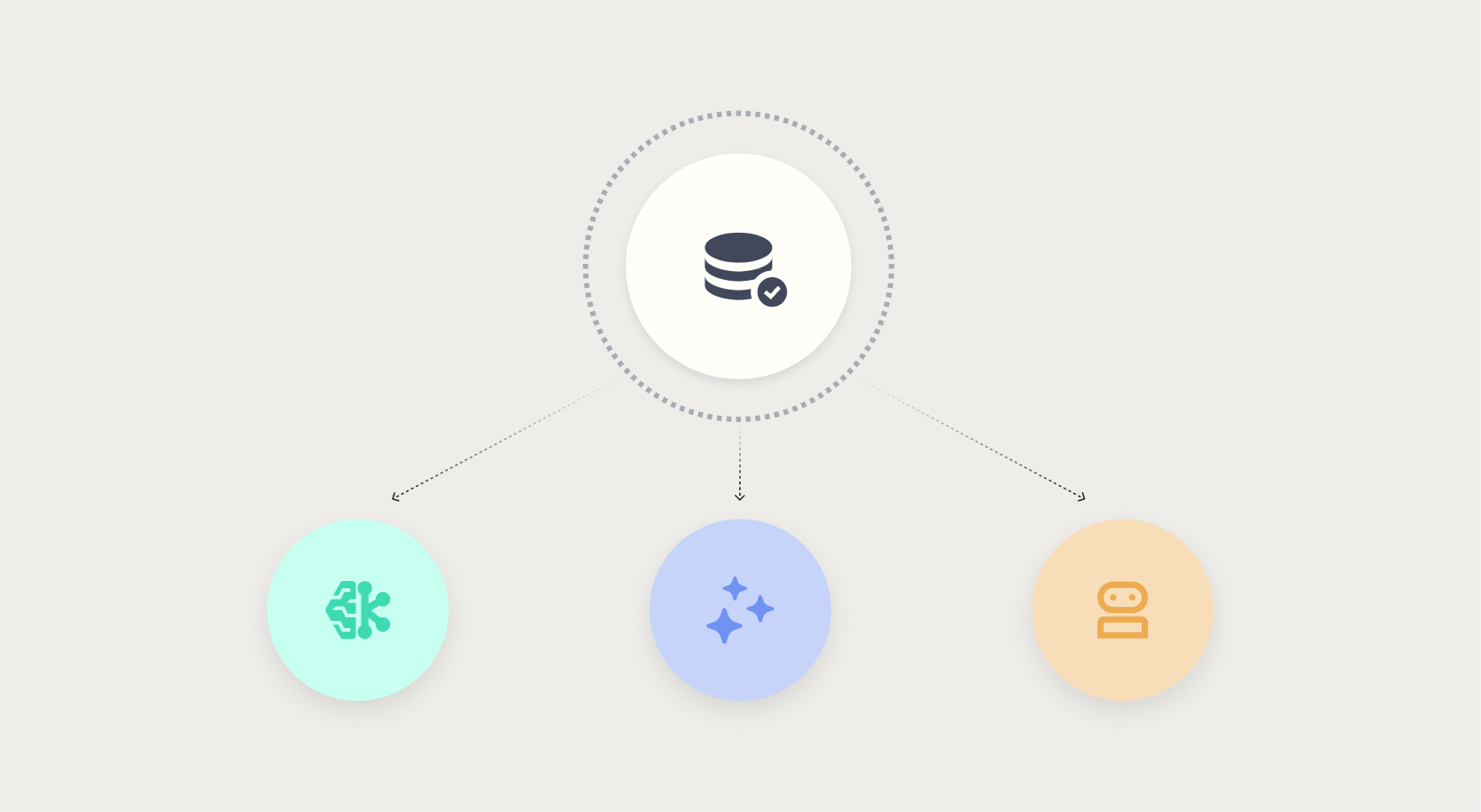

Data quality: The hidden bottleneck in machine learning and AI systems

Introduction: When clean data turns dirty

No one deliberately builds a bad model. Yet every day, machine learning projects stall, drift, or fail silently in production, not because of flawed algorithms but because of data that looked clean until it wasn’t. By the time a defect is discovered, a pricing model may have already caused a $2.3 million margin shortfall, a chatbot might have delivered confident wrong answers to customers, or an autonomous agent could have committed budget on incomplete supplier data. Poor data quality is among the most common reasons AI initiatives fail to deliver value.

Traditional machine learning: Visible failures, contained damage

In classic machine learning, data quality problems are at least visible. A dashboard shows an unexpected number, a human analyst spots the anomaly, and the model is retrained. The damage is contained. This familiar relationship between ML and data quality allows organizations to catch errors before they spread. However, as AI evolves, that containment breaks down.

Generative AI: The confidence trap

Generative AI systems, such as large language models and chatbots, introduce a new class of data quality risks. When a chatbot pulls from a stale knowledge base, it delivers a confident, wrong answer—with no signal that anything is amiss. The system operates exactly as designed, but on data that was never fit for purpose. The damage is invisible and can erode customer trust before anyone notices.

Why traditional monitoring fails

Conventional data quality checks—like null counts, range validation, or schema conformity—do not catch semantic drift. A knowledge base may be perfectly formatted and complete, yet factually outdated. Without continuous validation against ground truth, generative models amplify errors that are indistinguishable from correct outputs.
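To make the gap concrete, here is a minimal sketch of a knowledge-base entry that passes structural checks yet fails a freshness check. The `Article` class, the `last_verified` field, and the 90-day window are all assumptions chosen for illustration, not part of any particular product.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class Article:
    # Hypothetical knowledge-base entry; field names are illustrative.
    title: str
    body: str
    last_verified: datetime  # when a human last confirmed the content


def passes_structural_checks(article: Article) -> bool:
    # Conventional check: required fields are present and non-empty.
    return bool(article.title.strip()) and bool(article.body.strip())


def is_fresh(article: Article, now: datetime,
             max_age: timedelta = timedelta(days=90)) -> bool:
    # Freshness check: content was verified within the allowed window.
    # The 90-day default is an assumption; pick a value per content type.
    return now - article.last_verified <= max_age


now = datetime(2025, 1, 1, tzinfo=timezone.utc)
refund_policy = Article(
    title="Refund policy",
    body="Refunds are issued within 30 days of purchase.",
    last_verified=datetime(2023, 6, 1, tzinfo=timezone.utc),
)

# Well-formed and complete, but not verified recently enough to trust.
structurally_ok = passes_structural_checks(refund_policy)
fresh = is_fresh(refund_policy, now)
```

A freshness timestamp is only a proxy for correctness. It shows when the content was last confirmed, not whether it is still true, so it complements validation against ground truth rather than replacing it.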

Agentic AI: Action without oversight

The shift from prediction to autonomous action makes data quality failures even more dangerous. An autonomous procurement agent that commits budget on incomplete supplier data may finalize a contract before any human reviews it. Unlike a forecasting model whose errors remain on a dashboard, agentic systems act on bad data directly—and the consequences are real and immediate.

The convergence of speed and risk

Agentic AI operates at machine speed. Once a decision is made, reversing it is costly or impossible. Traditional data quality gates—designed for batch processing and manual review—cannot keep pace. The tolerance for bad data drops to near zero, yet the ability to detect it before action is severely limited.

Building a data quality framework for the age of AI

Organizations that want to deploy generative and agentic AI safely must rethink their approach to data quality. The following strategies can help:

Implement real-time data validation

Move beyond periodic batch checks. Use streaming validation that flags inconsistencies as data enters the system. For agentic workflows, validate not just the input data but also the decision context—ensuring that every action is backed by current, fit-for-purpose data.
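One way to picture streaming validation is a generator that checks each record as it arrives instead of waiting for a batch job. The sketch below assumes supplier records arrive as dictionaries with hypothetical fields `supplier_id`, `unit_price`, and `updated_at`. The field names and the 24-hour freshness window are illustrative only.

```python
from datetime import datetime, timedelta, timezone
from typing import Iterable, Iterator

# Hypothetical required fields for a supplier record.
REQUIRED_FIELDS = ("supplier_id", "unit_price", "updated_at")


def validate_record(record: dict, now: datetime,
                    max_age: timedelta) -> list[str]:
    """Return a list of problems found in one record (empty if clean)."""
    problems = []
    for name in REQUIRED_FIELDS:
        if record.get(name) is None:
            problems.append(f"missing {name}")
    price = record.get("unit_price")
    if price is not None and price <= 0:
        problems.append("non-positive unit_price")
    updated_at = record.get("updated_at")
    if updated_at is not None and now - updated_at > max_age:
        problems.append("stale record")
    return problems


def validate_stream(records: Iterable[dict], now: datetime,
                    max_age: timedelta = timedelta(hours=24),
                    ) -> Iterator[tuple[dict, list[str]]]:
    """Yield each record with its problems as soon as it is seen."""
    for record in records:
        yield record, validate_record(record, now, max_age)


now = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
incoming = [
    {"supplier_id": "S1", "unit_price": 9.5,
     "updated_at": now - timedelta(hours=1)},
    {"supplier_id": "S2", "unit_price": -1.0,
     "updated_at": now - timedelta(days=3)},
]
flagged = [(r["supplier_id"], p)
           for r, p in validate_stream(incoming, now) if p]
```

In an agentic workflow, the same function could run immediately before an action is taken, so the agent validates the decision context and not only the data at ingestion time.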

Continuous monitoring of model outputs

Monitor not just data inputs but also model outputs. For generative AI, compare responses against a verified knowledge base in near real time. For agentic systems, log all actions and flag anomalies, such as a procurement agent that suddenly changes its spending pattern without a clear data trigger.
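As a rough sketch of flagging a sudden change in spending, the function below compares a new purchase amount with the agent's recent history using a z-score. The z-score is a simple technique picked here for illustration, and the function name and threshold of 3.0 are assumptions. A production system would likely use something more robust to seasonality and outliers.

```python
from statistics import mean, stdev


def is_spend_anomaly(history: list[float], new_amount: float,
                     z_threshold: float = 3.0) -> bool:
    """Flag new_amount if it sits far from the historical mean.

    history is a list of recent purchase amounts logged for the agent.
    The threshold of 3.0 standard deviations is an illustrative default.
    """
    if len(history) < 2:
        # Not enough data to estimate spread; do not flag.
        return False
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # All past amounts identical: any different amount is unusual.
        return new_amount != mu
    return abs(new_amount - mu) / sigma > z_threshold


recent_purchases = [100.0, 110.0, 95.0, 105.0, 90.0]
typical = is_spend_anomaly(recent_purchases, 104.0)
unusual = is_spend_anomaly(recent_purchases, 500.0)
```

A flag on its own does not say whether the spend was wrong. It only marks an action worth checking against the data that triggered it.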

Adopt a lineage-first data culture

Every data point that feeds an AI model—especially autonomous agents—should have a clear lineage: its source, transformation history, and freshness. When a failure does occur, lineage allows teams to trace back to the root cause quickly and stop the bleeding.
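A lineage record can be as simple as metadata that travels with each value. The sketch below assumes a hypothetical `Lineage` structure holding the three elements named above: source, transformation history, and a retrieval timestamp for freshness. Real lineage tooling is far richer, so treat this only as an illustration of the idea.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Lineage:
    # Hypothetical lineage record; names are illustrative.
    source: str                            # where the value came from
    retrieved_at: datetime                 # when it was read (freshness)
    transformations: tuple[str, ...] = ()  # ordered history of steps

    def with_step(self, step: str) -> "Lineage":
        # Return a new record with one more transformation appended.
        return Lineage(self.source, self.retrieved_at,
                       self.transformations + (step,))


@dataclass(frozen=True)
class TrackedValue:
    value: float
    lineage: Lineage


def convert_currency(tv: TrackedValue, rate: float,
                     label: str) -> TrackedValue:
    # Every transformation returns a new value plus updated lineage.
    return TrackedValue(tv.value * rate, tv.lineage.with_step(label))


raw = TrackedValue(
    value=200.0,
    lineage=Lineage(
        source="supplier_feed_v2",  # hypothetical source name
        retrieved_at=datetime(2025, 1, 1, tzinfo=timezone.utc),
    ),
)
converted = convert_currency(raw, 0.5, "eur_to_usd_example_rate")
```

Because the records are immutable, the history attached to `converted` cannot be altered after the fact, which is what makes tracing back to a root cause reliable.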

Human-in-the-loop for high-stakes decisions

For agentic AI, design guardrails that require human approval for actions above a certain risk threshold. This buys time to validate data quality before irreversible consequences occur. Over time, as data quality improves, the level of autonomy can increase.
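Such a guardrail can be sketched as a small routing function that decides whether a proposed action runs automatically, waits for a person, or is blocked. The `ProposedAction` fields, the use of amount as the risk measure, and the 10,000 threshold are assumptions for illustration. Real risk scoring would likely weigh more than a single number.

```python
from dataclasses import dataclass


@dataclass
class ProposedAction:
    # Hypothetical action an agent wants to take.
    description: str
    amount: float         # used here as a stand-in for risk
    data_validated: bool  # did the supporting data pass validation?


def route_action(action: ProposedAction,
                 approval_threshold: float = 10_000.0) -> str:
    """Return 'block', 'needs_human_approval', or 'auto_execute'."""
    if not action.data_validated:
        # Never act on data that failed validation, whatever the size.
        return "block"
    if action.amount >= approval_threshold:
        # High-stakes action: pause for a human reviewer.
        return "needs_human_approval"
    return "auto_execute"


small = route_action(ProposedAction("Reorder paper", 250.0, True))
large = route_action(ProposedAction("Sign supplier contract", 50_000.0, True))
unverified = route_action(ProposedAction("Reorder paper", 250.0, False))
```

Raising `approval_threshold` over time is one way to express the gradual increase in autonomy described above, once validation results justify it.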

Conclusion: From silent failure to robust trust

The pattern is clear: as AI moves from prediction to action, the tolerance for data quality failures shrinks, and catching those failures before they cause damage becomes harder. Generative and agentic AI break the containment that made traditional ML failures manageable. The way forward is to embed data quality into every layer of the AI pipeline, from ingestion to output. By doing so, organizations can replace silent failures with trust and turn data quality into a competitive advantage.
